If you have been switching between Cursor, Claude Code, and the standard VS Code stack, you have probably heard developers talk about Windsurf as another option, one that sits somewhere between an autocompletion tool and a full agentic IDE. The pitch is simple: a familiar editor surface with a smarter agent, Cascade, that can read your codebase, plan multi-file changes, and run terminal commands on its own. This Windsurf editor setup guide walks through the install, the configuration that actually matters, and how to build Cascade workflows that survive contact with a real project.

The goal is not a feature tour. By the end, you will have Windsurf installed, Cascade configured against a real repo, a custom rules file shaping its behavior, at least one workflow you can re-run, and an MCP server connected for external tool access. Where Windsurf overlaps with Cursor or Claude Code, the differences are called out so you can decide whether it deserves a permanent slot in your toolchain.

What Is Windsurf and Why Use It?

Windsurf is an AI-native code editor from Codeium that combines a VS Code-style editor with an autonomous agent called Cascade. Unlike pure inline-completion tools, Cascade can read across an entire repository, edit multiple files, run shell commands, and pause for your approval at meaningful checkpoints. In practice, that means you can describe a feature in plain English and watch the agent plan, write, test, and refine it inside the same window where you read code.

The reason developers move to Windsurf usually comes down to two things. First, the agent has tighter integration with the editor than browser-based assistants: diffs appear inline, terminals are real terminals, and file watching is built in. Second, the workflow system lets you save repeatable agent procedures (release checks, schema migrations, dependency upgrades) as first-class objects you can rerun. That sits between the freeform chat of Cursor and the script-heavy approach of Claude Code’s slash commands.

Windsurf is not magic, however. It is still an LLM driving an editor, with all the usual constraints around context windows, token budgets, and the tendency to confidently produce code that almost works. The setup choices you make early — how you structure rules, what tools you connect, how you scope each Cascade run — determine whether the experience feels like a senior pair programmer or a slightly chaotic intern.

Installing Windsurf

Windsurf ships as a desktop application for macOS, Windows, and Linux. Head to the official Codeium download page, grab the installer, and run it. On first launch you will be asked to sign in with a Codeium account, then prompted to choose among the Free, Pro, and Team plans. The Free tier covers light personal use; serious daily work usually requires Pro for the larger context window and access to higher-tier models.

Once you are signed in, Windsurf imports your VS Code settings if it detects them. That includes themes, key bindings, and most extensions from the Open VSX registry. A handful of proprietary extensions (notably some Microsoft-published ones) are not available because of licensing, so check whether anything you depend on is missing before you commit to it as your primary editor.

```shell
# macOS via Homebrew (if you prefer the cask)
brew install --cask windsurf

# Verify the CLI helper installed correctly
windsurf --version
```

After the install completes, open a real project — not a scratch folder. Cascade’s value compounds with codebase context, so trying it on a single-file demo will give you a misleading first impression. Open a repo with at least a few thousand lines of code, ideally one you know well enough to spot when the agent is wrong.

Configuring Your First Workspace

Before launching Cascade, take five minutes to configure the workspace. Open the settings panel (Ctrl/Cmd + ,) and look for the Windsurf section. The settings that matter most for daily use are the default model, the agent autonomy level, and the file ignore patterns.

For the default model, start with the highest-tier reasoning model your plan allows. Cheaper models save credits but produce noticeably worse multi-file edits, and you will burn the savings re-prompting. The autonomy level controls how often Cascade pauses for approval — “Confirm before each tool call” is a useful default until you trust the agent on a given codebase. Once you trust it, you can switch to “Auto-execute safe tools” so it runs reads and greps without asking.

```jsonc
// .windsurf/settings.json (workspace-level overrides)
{
  "cascade.defaultModel": "claude-sonnet-4-6",
  "cascade.autonomy": "confirm-edits",
  "cascade.ignore": [
    "node_modules",
    "dist",
    "build",
    ".next",
    "coverage",
    "*.lock",
    // "*.lock" does not match these lockfiles, so name them explicitly
    "package-lock.json",
    "pnpm-lock.yaml",
    "*.log"
  ],
  "cascade.maxFilesPerTurn": 12
}
```

The ignore patterns matter more than they look. Cascade indexes everything that is not ignored, and a large node_modules directory will dilute the embeddings used for code search, leading to worse retrieval. Always add your build output, lockfiles, and any vendored dependencies. If your project uses a non-standard layout — say, a Python virtualenv inside the repo — add that too.
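To make the effect of an ignore list concrete, here is a minimal Python sketch that applies a subset of the patterns from the settings example to a handful of paths. It assumes simple glob semantics in which a pattern is matched against each path segment; Windsurf's actual matching rules may differ, and `is_ignored` is a hypothetical helper, not part of Windsurf.

```python
from fnmatch import fnmatch
from pathlib import PurePosixPath

# Hypothetical pattern list, mirroring part of the example settings.
PATTERNS = ["node_modules", "dist", "*.lock", "*.log"]


def is_ignored(path: str, patterns: list[str] = PATTERNS) -> bool:
    """Return True if any segment of the path matches any pattern."""
    segments = PurePosixPath(path).parts
    return any(fnmatch(seg, pat) for seg in segments for pat in patterns)


paths = [
    "src/auth/middleware.ts",
    "node_modules/react/index.js",
    "dist/bundle.js",
    "yarn.lock",
    "package-lock.json",
    "logs/server.log",
]

# Paths that survive the filter are the ones that would be indexed.
indexed = [p for p in paths if not is_ignored(p)]
print(indexed)
```

Under these glob semantics, `*.lock` matches `yarn.lock` but not `package-lock.json`, so lockfiles with other naming schemes need their own entries.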

The maxFilesPerTurn setting caps how many files Cascade can edit in a single turn. Twelve is a good ceiling for most projects: large enough for cross-cutting refactors, small enough that you can still review the diff in one sitting.

Understanding Cascade: The Agent Behind Windsurf

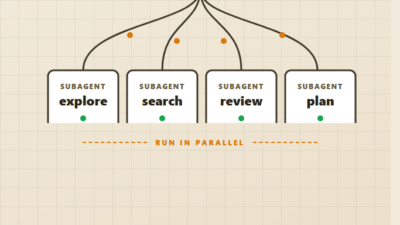

Cascade is the agent loop inside Windsurf. Each turn, it receives your prompt plus a curated slice of the codebase, picks one or more tools to call (read file, search, edit, run shell, web fetch), executes them, and either continues or hands back to you. If you have used Claude Code’s agent loop, the model is similar — but Cascade has more visual surface area for the human in the loop.
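The loop described above can be sketched in a few lines of Python. This is a toy illustration of the general pattern, not Cascade's implementation: `fake_model`, the `TOOLS` table, and the message format are all hypothetical, and the "model" is a hard-coded stub so the example runs offline.

```python
from typing import Callable

# Hypothetical tool table; a real agent exposes read/search/edit/shell tools.
TOOLS: dict[str, Callable[[str], str]] = {
    "read_file": lambda arg: f"<contents of {arg}>",
    "search": lambda arg: f"<matches for {arg}>",
}


def fake_model(history: list[dict]) -> dict:
    """Stub standing in for the LLM: picks the next action from the history."""
    tool_calls = [m for m in history if m["role"] == "tool"]
    if len(tool_calls) == 0:
        return {"action": "tool", "name": "search", "arg": "verifyJwt"}
    if len(tool_calls) == 1:
        return {"action": "tool", "name": "read_file", "arg": "auth/middleware.ts"}
    return {"action": "respond", "text": "Found the JWT check in auth/middleware.ts."}


def run_turn(prompt: str, max_steps: int = 5) -> list[dict]:
    """Run one turn: call tools until the model responds or the budget runs out."""
    history = [{"role": "user", "content": prompt}]
    for _ in range(max_steps):
        decision = fake_model(history)
        if decision["action"] == "respond":
            # The model is done; hand control back to the human.
            history.append({"role": "assistant", "content": decision["text"]})
            break
        result = TOOLS[decision["name"]](decision["arg"])
        history.append({"role": "tool", "name": decision["name"], "content": result})
    return history


history = run_turn("Where do we verify JWTs?")
for message in history:
    print(message["role"], "->", message["content"])
```

The `max_steps` budget is the part worth noticing: without a cap, a confused agent can keep calling tools indefinitely, which is one reason approval checkpoints exist.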

The pieces you will interact with daily:

- Cascade chat panel — the main conversation surface. Each message can include file references, command output, and inline diffs.

- Plan mode — for larger requests, Cascade drafts a multi-step plan before touching code. You can edit the plan before it executes, which is the single biggest lever for quality.

- Inline diffs — proposed edits appear in the editor with green/red highlighting. You accept, reject, or edit them before they hit disk.

- Terminal integration — when Cascade runs commands, the output streams into a real Windsurf terminal you can take over.

- Memories — facts Cascade should remember across sessions. Stored per-workspace, automatically injected into context.

The mental model worth holding onto is that Cascade is an agent operating inside the editor, not an editor operating on top of an agent. That distinction shapes how you should prompt it. Vague prompts (“clean up this file”) produce vague edits. Specific prompts that name the file, the constraint, and the success criteria produce reliable changes.

For a deeper look at agent loops in general, the guide on building AI agents with tools, planning, and execution covers the underlying patterns. Cascade is one implementation of those patterns wrapped in an editor.

Building Your First Cascade Workflow

Workflows are Windsurf’s answer to the “I keep typing the same multi-step prompt” problem. A workflow is a saved procedure — a name, a description, and a structured prompt template — that Cascade can execute on demand. Think of them as runbooks the agent can follow.

To create one, open the Cascade panel and click the workflow icon, or create the file manually under .windsurf/workflows/. Each workflow is a Markdown file with frontmatter:

```markdown
---
name: pre-pr-check
description: Run before opening a PR (lint, tests, type check, and stage diff)
auto_execute_steps:
  - read_file
  - run_command
---

# Pre-PR Check Workflow

Run this workflow before I open a pull request. The goal is to catch issues
that would otherwise come up in CI or in code review.

Steps:

1. Run the project's lint command. If it fails, attempt one round of fixes
   for any auto-fixable rules. Do not fix anything that requires manual
   judgment; flag those for me instead.
2. Run the type checker. If types fail, surface the first three errors
   with file paths and proposed fixes; do not edit code yet.
3. Run the test suite. If tests fail, identify whether the failures are
   pre-existing or introduced by the current branch.
4. Summarize the diff against the main branch in 5 bullets or fewer,
   focused on behavior changes rather than file lists.

Output format:

- Lint: PASS or specific failures
- Types: PASS or specific failures
- Tests: PASS or specific failures
- Diff summary: 5 bullets max
```

Save it, then invoke it from Cascade with /pre-pr-check. The agent reads the workflow, follows the steps, and pauses for approval at each tool call (or auto-runs the tools you whitelisted). What makes this powerful is repeatability — the same procedure produces consistent output across days and projects.
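Because workflows are plain files, they are easy to check in CI. The sketch below is a hypothetical helper, not a Windsurf feature: it splits a workflow file into frontmatter and body using only the standard library and confirms that the `name` and `description` fields from the example are present. It handles only flat `key: value` pairs and simple `- item` lists, which is enough for the example but is not a full YAML parser.

```python
def parse_workflow(text: str) -> tuple[dict, str]:
    """Split a workflow file into (frontmatter dict, body text)."""
    lines = text.strip().splitlines()
    if not lines or lines[0].strip() != "---":
        raise ValueError("workflow must start with a '---' frontmatter fence")
    try:
        end = next(i for i, line in enumerate(lines[1:], start=1) if line.strip() == "---")
    except StopIteration:
        raise ValueError("frontmatter is missing its closing '---'") from None

    meta: dict = {}
    current_key = None
    for line in lines[1:end]:
        stripped = line.strip()
        if not stripped:
            continue
        is_item = stripped.startswith("- ")
        if is_item and current_key is not None and isinstance(meta[current_key], list):
            # List item belonging to the most recent key.
            meta[current_key].append(stripped[2:].strip())
        else:
            key, _, value = stripped.partition(":")
            current_key = key.strip()
            # An empty value means a list follows on the next lines.
            meta[current_key] = value.strip() if value.strip() else []
    body = "\n".join(lines[end + 1:]).strip()
    return meta, body


SAMPLE = """---
name: pre-pr-check
description: Run lint, types, and tests before opening a PR
auto_execute_steps:
  - read_file
  - run_command
---
# Pre-PR Check Workflow

Steps go here.
"""

meta, body = parse_workflow(SAMPLE)
missing = [field for field in ("name", "description") if not meta.get(field)]
print(meta["name"], meta["auto_execute_steps"], missing)
```

A check like this could run over every file in the workflows directory so that a malformed workflow is caught in review rather than when someone invokes it.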

A few workflows worth building early:

- Codebase tour — walks a new repo by reading the entry points, package manifests, and main config files, then summarizes the architecture.

- Bug repro — given a bug description, finds the relevant module, writes a failing test, and stops before fixing it (so you can verify the repro is real).

- Dependency upgrade — runs the package manager’s upgrade command, summarizes breaking changes from changelogs, and proposes minimal patches.

- Migration scaffold — generates a new migration file with the standard project boilerplate, populated from a plain-English schema description.

Workflows should be specific enough to be useful but general enough to reuse. If a workflow only ever applies to one task, it is just a long prompt. The sweet spot is a procedure you would run at least weekly.

Customizing Cascade with Rules and Memories

Rules and memories shape how Cascade behaves without you having to repeat yourself in every prompt. Rules are deterministic instructions that always apply; memories are facts the agent should keep in mind. Both live in .windsurf/ and are versioned with your project.

Rules go in .windsurf/rules.md. The format is plain Markdown with section headings, and Cascade includes the file in every prompt automatically. Keep it short — under a few hundred lines — because everything in here costs tokens on every turn.

```markdown
# Project Rules for Cascade

## Code style

- TypeScript strict mode is non-negotiable. Never use `any`; prefer `unknown` with narrowing.
- Tests live next to the file they test as `*.test.ts`.
- Public APIs are documented with JSDoc; internal helpers are not.

## Patterns to follow

- All HTTP handlers go through the `withAuth` and `withRateLimit` wrappers.
- Database access is through the `db/queries` module; raw SQL outside that module is a smell.
- New features get a feature flag from `lib/flags.ts` until they are stable.

## Patterns to avoid

- Do not introduce new dependencies without asking; we keep the bundle small.
- Do not rewrite working code "for clarity" without an explicit request.
- Do not add `console.log` to committed code; use the logger in `lib/log.ts`.

## When unsure

- Ask before making architectural decisions.
- Prefer surfacing 2–3 options with trade-offs over picking one silently.
```

Memories are different — they capture facts Cascade should know but that change over time. Examples: “the staging database is read-only on weekends”, “the marketing team owns /landing/* routes”, “Sarah is the reviewer for auth changes”. You add them through the Cascade panel as the project evolves, and they stick around across sessions.

The trap to avoid is over-stuffing rules. Every line in rules.md is paid for in tokens on every turn, so push project-wide conventions there and keep one-off context in the prompt. If you find yourself writing rules for “what this specific feature does”, that probably belongs in code comments or the README instead. The same principle applies in other AI editors — see the Cursor rules and .cursorrules guide for a parallel discussion in Cursor’s ecosystem.
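A rough back-of-the-envelope calculation makes the cost concrete. The sketch below assumes about four characters per token, a common rule of thumb for English text rather than a figure from Windsurf, and the rules-file sizes and turn counts are made-up inputs.

```python
CHARS_PER_TOKEN = 4  # Assumed heuristic; real tokenizers vary by model.


def estimate_tokens(text: str) -> int:
    """Approximate the token count of a rules file from its character count."""
    return max(1, len(text) // CHARS_PER_TOKEN)


def rules_overhead(rules_text: str, turns: int) -> int:
    """Tokens spent re-sending the rules file over a number of turns."""
    return estimate_tokens(rules_text) * turns


# Two hypothetical rules files: 100 lines and 1,000 lines of similar length.
small_rules = "- Prefer `unknown` with narrowing over `any` in all new code.\n" * 100
large_rules = small_rules * 10

for label, rules in [("100 lines", small_rules), ("1,000 lines", large_rules)]:
    per_turn = estimate_tokens(rules)
    print(f"{label}: ~{per_turn} tokens per turn, ~{rules_overhead(rules, 50)} over 50 turns")
```

The absolute numbers are only estimates, but the scaling is the point: a rules file ten times longer costs ten times as much on every single turn.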

Connecting MCP Servers and External Tools

Windsurf supports the Model Context Protocol, which means Cascade can talk to external tool servers — databases, GitHub, Linear, browser automation — without you wiring up custom integrations. To connect one, edit ~/.codeium/windsurf/mcp_config.json (or the workspace equivalent) and add a server entry.

```json
{
  "mcpServers": {
    "github": {
      "command": "npx",
      "args": ["-y", "@modelcontextprotocol/server-github"],
      "env": {
        "GITHUB_PERSONAL_ACCESS_TOKEN": "${GITHUB_TOKEN}"
      }
    },
    "postgres": {
      "command": "npx",
      "args": [
        "-y",
        "@modelcontextprotocol/server-postgres",
        "postgresql://localhost:5432/myapp_dev"
      ]
    }
  }
}
```

Restart Windsurf, and Cascade will list the new tools the next time you open the chat. From there, you can ask things like “find the last three open PRs that touched the auth module” or “describe the schema of the orders table” and Cascade will route through the MCP server. For the underlying protocol details, the Claude Code MCP servers tutorial covers the same protocol in another agent’s context — the configuration is largely transferable.

Be deliberate about what you connect. Every additional MCP server expands Cascade’s tool surface, which both helps it accomplish more and increases the risk of it picking the wrong tool. Start with one or two well-scoped servers (a code-host like GitHub, a database, maybe a docs search) and add more only when a workflow demands them.
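A config like the one above is easy to sanity-check with a short script. The following sketch is a hypothetical pre-flight check, not something Windsurf ships: it parses a config with the same `mcpServers` shape, confirms that each server has a `command` string and an `args` list, and flags env values that look hard-coded rather than written as `${...}` placeholders. The placeholder convention is taken from the example; whether your Windsurf version expands it is worth verifying, and the `broken` server and its key are invented test data.

```python
import json

CONFIG_TEXT = """
{
  "mcpServers": {
    "github": {
      "command": "npx",
      "args": ["-y", "@modelcontextprotocol/server-github"],
      "env": {"GITHUB_PERSONAL_ACCESS_TOKEN": "${GITHUB_TOKEN}"}
    },
    "broken": {
      "args": "not-a-list",
      "env": {"API_KEY": "sk-live-123"}
    }
  }
}
"""


def check_mcp_config(text: str) -> list[str]:
    """Return a list of human-readable problems found in an MCP config."""
    problems = []
    servers = json.loads(text).get("mcpServers", {})
    for name, spec in servers.items():
        if not isinstance(spec.get("command"), str):
            problems.append(f"{name}: missing 'command'")
        if not isinstance(spec.get("args", []), list):
            problems.append(f"{name}: 'args' must be a list")
        for key, value in spec.get("env", {}).items():
            # Treat anything that is not a ${VAR} placeholder as a literal secret.
            if not (value.startswith("${") and value.endswith("}")):
                problems.append(f"{name}: env '{key}' looks hard-coded")
    return problems


problems = check_mcp_config(CONFIG_TEXT)
for problem in problems:
    print(problem)
```

Keeping literal tokens out of the file matters most for the workspace-level config, since that one is likely to be committed alongside the code.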

When to Use Windsurf

- You want an editor-first experience with the agent integrated directly into the file tree, terminals, and diffs.

- Your projects are large enough that codebase-wide context retrieval pays off — typically anything past 50k lines or with non-trivial cross-module dependencies.

- You frequently run repeatable multi-step procedures and want them as first-class workflows instead of pasted prompts.

- You are comfortable in a VS Code-style editor and do not want to switch to a terminal-first agent.

- Your team standardizes on shared rules files committed alongside the code so the agent behaves consistently across contributors.

When NOT to Use Windsurf

- You need every Microsoft-published VS Code extension; some are unavailable due to licensing.

- Your workflow is dominated by terminal-native operations and you prefer agents like Claude Code or Aider that live in the shell.

- You only edit one or two files at a time and rarely need cross-file reasoning — a lighter inline-completion tool is cheaper and faster.

- Your projects are highly regulated and you cannot send code to a third-party model. Windsurf supports some local-model setups but the experience is best with hosted frontier models.

- You are evaluating IDE alternatives and want to compare directly — pair this guide with the Cursor IDE setup guide and the Cursor vs Claude Code comparison for context.

Common Mistakes with Windsurf

The most frequent mistake is treating Cascade as a freeform chatbot. Vague prompts like “make this better” produce vague results. Naming the file, the constraint, and the success criteria — “in auth/middleware.ts, replace the manual JWT verification with the jose library, preserve the existing error contract, and update tests” — produces reliable edits. The agent is good; you still need to be specific.

The second mistake is letting rules.md grow unbounded. Every rule is paid for in tokens on every turn, so a 1,000-line rules file silently slows every interaction and leaves less context for the actual code. Keep rules to genuinely project-wide conventions and push feature-specific notes into the prompt or the README.

The third mistake is granting full autonomy too early. Auto-executing every tool call feels productive until the agent runs a destructive command in a moment of confusion — a misplaced rm, a migration that drops a column, a force-push to the wrong branch. Start in confirm-mode, build trust on a specific repo over a few weeks, and only then loosen autonomy on the categories you have observed working safely.
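One way to think about loosening autonomy by category is as an allowlist. The sketch below is a hypothetical policy function, not Windsurf's permission model: commands whose first word is on a read-only allowlist run automatically, and everything else requires confirmation. The command sets are illustrative assumptions, and the check is deliberately conservative.

```python
import shlex

# Assumed examples of read-only commands that are safe to auto-run.
SAFE_COMMANDS = {"ls", "cat", "grep", "rg", "pwd"}
# Git subcommands treated as read-only in this sketch.
SAFE_GIT_SUBCOMMANDS = {"status", "diff", "log", "show"}
# Characters that can chain, redirect, or substitute commands in a shell.
SHELL_METACHARS = set(";&|><`$")


def needs_confirmation(command: str) -> bool:
    """Return True unless the command is on the read-only allowlist."""
    if SHELL_METACHARS & set(command):
        return True  # Could hide an unsafe command behind a safe one.
    try:
        tokens = shlex.split(command)
    except ValueError:
        return True  # Unparseable input is never auto-run.
    if not tokens:
        return True
    if tokens[0] == "git":
        return len(tokens) < 2 or tokens[1] not in SAFE_GIT_SUBCOMMANDS
    return tokens[0] not in SAFE_COMMANDS


for cmd in ["grep -rn verifyJwt src", "git status", "rm -rf build",
            "git push --force origin main", "cat notes.txt; rm notes.txt"]:
    print(f"{cmd!r}: confirm={needs_confirmation(cmd)}")
```

The default-deny shape is the design choice to copy: anything the policy does not recognize falls through to confirmation, so new categories are opted in only after you have watched them behave.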

The fourth mistake is ignoring the indexing setup. Cascade’s quality depends on what is in the index, and skipping the ignore patterns means your node_modules and build output are competing with your real source code for retrieval slots. Spend the five minutes to write a tight ignore list. The difference is visible immediately.

Real-World Setup Scenario

A practical scenario: a backend engineer joining a mid-sized TypeScript monorepo with a few hundred files across an API service, a worker service, and a shared package. The engineer wants Windsurf to be useful by the end of the first day, not after weeks of prompt tuning.

Day one looks like this. After install, the engineer opens the monorepo and writes a .windsurf/rules.md with the team’s existing conventions — strict TypeScript, Vitest tests next to source, the standard error-handling pattern, the rule that new dependencies require team approval. They configure ignore patterns to exclude node_modules, dist, and the generated GraphQL types directory. They connect a GitHub MCP server for PR lookups and a Postgres MCP server pointed at the local dev database.

By day three, they have built two workflows: a “codebase tour” that runs once per package to surface architecture summaries, and a “pre-PR check” that runs lint, types, and tests before they push. The tour workflow paid for itself the first time — it surfaced an internal client library nobody mentioned in onboarding docs. The pre-PR workflow caught a type error before it reached CI. Neither workflow was complicated; they were just consistent enough to use without thinking.

The trade-off the engineer notices is a familiar one: Cascade is excellent at multi-file edits within the conventions encoded in rules, and noticeably worse at architectural decisions where the right answer requires team context that has not been written down. That is the same limitation every AI agent has; the rules file is the bridge between the agent and the team’s shared understanding.

Conclusion

A good Windsurf editor setup takes about an hour: install, configure the workspace, write a focused rules file, build one or two workflows, and connect a single MCP server. From there, you grow the configuration as you discover what you actually run repeatedly. The point is not to maximize features; it is to make the agent useful for your codebase without burning context on irrelevant patterns.

If you are still deciding which AI editor to commit to, read the AI tools for coding productivity overview and the AI code assistants compared breakdown alongside this guide. The right answer depends on whether you want an editor-first experience (Windsurf, Cursor) or a terminal-first one (Claude Code, Aider) — and on which agent’s defaults match how your team already works.
